Crawl Budget Explained for Large Websites

FAQ

What is the crawl budget in SEO?

Crawl budget is the number of pages a search engine bot crawls on a website during a specific time period.

Does the crawl budget affect small websites?

Usually not. Crawl budget mainly affects large websites with thousands of pages.

How can I check crawl activity?

You can check crawl data using the Crawl Stats report in Google Search Console.

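What wastes the crawl budget?

Duplicate URLs, parameter variations, broken links, infinite URL spaces, and thin or low-value pages all consume crawl budget without adding value.
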
How do XML sitemaps help crawl budget?

XML sitemaps guide search engines to important pages, helping them crawl content more efficiently.

Does website speed affect crawl budget?

Yes. Faster websites allow search engines to crawl more pages without overloading the server.

Search engines discover and index web pages through a process called crawling. Every day, search engine bots visit millions of websites to collect information and update their indexes. However, these bots do not crawl every page on every website equally. Instead, they allocate a limited amount of resources to each site. This limit is known as the crawl budget.

For small websites, crawl budget usually isn’t a serious concern. But for large websites with thousands or millions of pages, crawl budget management becomes critical. If search engines fail to crawl important pages regularly, those pages may not appear in search results or may remain outdated in the index.

In this article, you will learn what crawl budget is, how it works, why it matters for large websites, and how to optimize it to improve your site’s search visibility.

What Is a Crawl Budget?

Crawl budget refers to the number of pages a search engine bot crawls on your website within a certain time frame.

Search engines like Google use automated programs called crawlers or bots (such as Googlebot) to scan web pages. However, crawling the entire internet continuously would require enormous computing resources. Because of this, search engines limit how many pages they crawl from each website.

In simple terms:

Crawl Budget = The number of URLs Googlebot is willing and able to crawl on your website during a given period.

For example:

- A small blog with 50 pages might have all pages crawled frequently.

- A large e-commerce site with 500,000 pages may only have a portion crawled daily.

This is why large websites must manage their crawl budget efficiently.

Why Crawl Budget Matters

For most websites with fewer than about 1,000 pages, crawl budget isn’t a major issue. But for large sites, it can affect indexing, ranking, and overall SEO performance.

Here are the main reasons crawl budget matters.

1. Faster Indexing of New Pages

If your crawl budget is used efficiently, search engines can discover and index new pages quickly.

For example:

A news website publishes 100 articles daily. If the crawl budget is limited and poorly managed, Google may crawl only some of them, leaving others unindexed.

2. Updated Content Gets Re-Indexed Faster

When you update existing content, search engines need to crawl the page again to detect changes.

Efficient crawl budget allocation ensures updated pages are recrawled quickly.

3. Important Pages Receive Priority

Without proper crawl management, search engines may waste time crawling unimportant pages like:

- Duplicate pages

- Filter URLs

- Tag pages

- Old archives

This can prevent important pages from being crawled.

4. Better SEO Performance

When search engines crawl the right pages regularly, your website can perform better in search rankings.

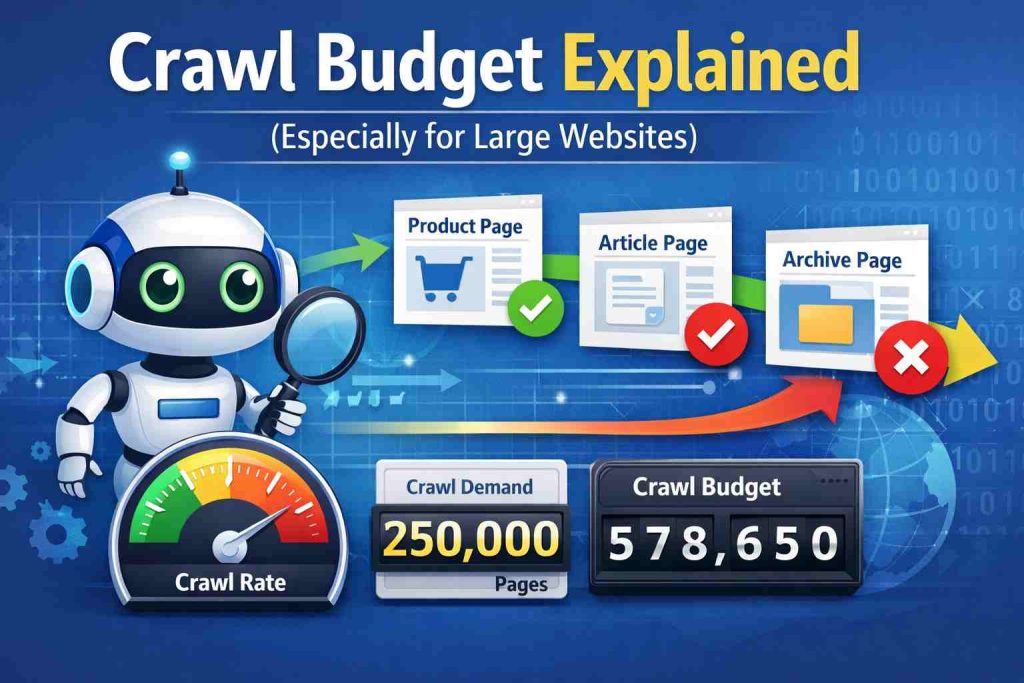

Crawl Budget vs Crawl Rate vs Crawl Demand

To understand the crawl budget properly, it’s important to know the two main factors that influence it.

Crawl Rate Limit

The crawl rate limit is the maximum number of requests Googlebot can make to your site without overloading the server.

If your server is slow or frequently returns errors, Google will reduce the crawl rate.

Factors affecting crawl rate:

- Server performance

- Page load speed

- Server errors

- Hosting quality

Example:

If a website frequently returns 500 server errors, Googlebot may crawl fewer pages to avoid causing additional stress.

Crawl Demand

Crawl demand refers to how much Google wants to crawl your site.

If your website is popular and frequently updated, Google will crawl it more often.

Factors affecting crawl demand include:

- Website popularity

- Fresh content

- Backlinks

- Content updates

Example:

A major news site like BBC publishes new content constantly. Because of this, search engines crawl it very frequently.

Crawl Budget Formula (Simplified)

In simple terms:

Crawl Budget = Crawl Rate Limit + Crawl Demand

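The plus sign is shorthand, not arithmetic: the crawl rate limit caps how much Googlebot can crawl, and crawl demand decides how much of that capacity it actually uses. Together, they determine how often and how deeply Googlebot crawls your website.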

Why Crawl Budget Is Especially Important for Large Websites

Large websites often face crawl budget challenges because they have thousands or millions of URLs.

Examples include:

- E-commerce websites

- Large blogs

- News portals

- Marketplace platforms

- Forums

These websites typically contain many types of pages, such as:

- Product pages

- Category pages

- Pagination pages

- Filter URLs

- Duplicate URLs

If search engines spend crawl resources on low-value pages, important pages may remain uncrawled.

Common Crawl Budget Problems

Many websites unintentionally waste crawl budget. Here are the most common issues.

1. Duplicate Content

Duplicate URLs confuse search engines and waste crawl resources.

Example:

example.com/product

example.com/product?ref=homepage

example.com/product?color=red

All three URLs may show the same content.

If search engines crawl each version, the crawl budget gets wasted.
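
One way to spot this kind of duplication in a URL export is to normalize each URL and group the ones that collapse to the same address. The sketch below is a minimal illustration, not a complete canonicalization routine. The list of ignored parameters is an assumption for this example (`color` is included only because the example above treats it as not changing the content), and the function names are hypothetical.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that do not change page content on this site.
IGNORED_PARAMS = {"ref", "color", "utm_source", "utm_medium", "utm_campaign"}


def normalize(url: str) -> str:
    """Drop ignored query parameters and sort the remaining ones."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def group_duplicates(urls):
    """Map each normalized URL to the crawled variants that collapse into it."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    # Keep only groups with more than one variant.
    return {key: variants for key, variants in groups.items() if len(variants) > 1}


urls = [
    "https://example.com/product",
    "https://example.com/product?ref=homepage",
    "https://example.com/product?color=red",
]
duplicates = group_duplicates(urls)
```

Here `duplicates` has a single key, `https://example.com/product`, with all three variants listed under it. Whether a parameter is safe to ignore depends on the site, so the ignore list should be reviewed before relying on the result.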

2. Parameter URLs

Dynamic parameters often generate thousands of URL variations.

Example:

example.com/shoes?size=9

example.com/shoes?size=10

example.com/shoes?size=11

If search engines crawl all variations, the crawl budget gets exhausted quickly.
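
To see which paths generate the most parameter variations, you can count distinct query strings per path in a list of crawled URLs. This is a rough sketch; the function name and the sample URLs are illustrative only.

```python
from collections import Counter
from urllib.parse import urlsplit


def variants_per_path(urls):
    """Count how many distinct query strings were seen for each path."""
    seen = set()
    for url in urls:
        parts = urlsplit(url)
        if parts.query:  # only URLs that carry parameters
            seen.add((parts.path, parts.query))
    return Counter(path for path, _query in seen)


crawled = [
    "https://example.com/shoes?size=9",
    "https://example.com/shoes?size=10",
    "https://example.com/shoes?size=11",
    "https://example.com/shoes",
]
counts = variants_per_path(crawled)
```

For the sample list, `counts["/shoes"]` is 3. Paths with very high counts are good candidates for canonical tags or crawl restrictions.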

3. Broken Links

Broken pages cause search engines to crawl URLs that no longer exist.

Example:

404 error pages

These waste crawl resources and hurt user experience.

4. Infinite Spaces

Some websites generate infinite URLs through filters or calendar pages.

Example:

example.com/calendar/2020

example.com/calendar/2021

example.com/calendar/2022

Search engines may continue crawling endless variations.

5. Low-Quality Pages

Thin or low-value pages also consume crawl budget unnecessarily.

Examples:

- Empty category pages

- Tag archives

- Outdated posts

How to Check Your Crawl Budget

You can analyze crawl activity using Google Search Console.

Steps:

- Open Google Search Console

- Navigate to Settings

- Click Crawl Stats

Here you can see:

- Total crawl requests

- Response codes

- File types crawled

- Googlebot activity

This data helps you understand how Google interacts with your website.
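
Server access logs can offer a second view of the same activity. The sketch below counts Googlebot requests by status code. It assumes the logs use the common "combined" format and identifies Googlebot by user-agent string only, which can be spoofed; the sample lines are made up for illustration.

```python
import re
from collections import Counter

# Assumed: access logs follow the "combined" log format.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_status_counts(lines):
    """Count requests per HTTP status for lines whose user agent mentions Googlebot."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if match and "Googlebot" in match.group("agent"):
            counts[match.group("status")] += 1
    return counts


# Made-up sample lines, for illustration only.
sample = [
    '66.249.66.1 - - [10/Mar/2025:10:00:00 +0000] "GET /shoes HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2025:10:00:05 +0000] "GET /old-page HTTP/1.1" 404 312 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Mar/2025:10:00:09 +0000] "GET /shoes HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (X11; Linux x86_64)"',
]
status_counts = googlebot_status_counts(sample)
```

For the sample, `status_counts` contains one `200` and one `404`. A rising share of 4xx or 5xx responses in this count is a signal worth comparing against the Crawl Stats report.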

Strategies to Optimize Crawl Budget

If you run a large website, optimizing crawl budget can improve indexing and SEO performance.

Here are the most effective techniques.

1. Improve Website Speed

Fast websites allow search engines to crawl more pages efficiently.

Ways to improve speed:

- Use caching

- Optimize images

- Use a CDN

- Minimize JavaScript

- Improve hosting

Example:

If a page loads in 0.8 seconds instead of 3 seconds, Googlebot can crawl more pages within the same time.
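
The arithmetic behind this example can be sketched as follows. This is a deliberately simplified model: it assumes pages are fetched one after another within a fixed time window (here an arbitrary 10 minutes), whereas real crawlers fetch in parallel and adjust their limits dynamically.

```python
def pages_fetched(window_ms: int, ms_per_page: int) -> int:
    """Pages fetched sequentially within a fixed time window (simplified model)."""
    return window_ms // ms_per_page


WINDOW_MS = 600_000  # assumed window of 10 minutes, purely illustrative

slow = pages_fetched(WINDOW_MS, 3_000)  # 3 seconds per page
fast = pages_fetched(WINDOW_MS, 800)    # 0.8 seconds per page

print(slow, fast, fast / slow)  # prints: 200 750 3.75
```

Under these assumptions, the faster site gets 3.75 times as many pages fetched in the same window.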

2. Fix Broken Links

Regularly audit your website to find and fix broken links.

Tools you can use:

- Screaming Frog

- Ahrefs

- SEMrush

- Google Search Console

Fixing these links ensures crawlers focus on valid pages.
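
Once an audit tool has exported its findings, a short script can turn them into a fix list. The sketch below assumes the export has been loaded as `(source_url, target_url, status_code)` tuples; that layout is hypothetical and will differ between tools.

```python
from collections import defaultdict

BROKEN_STATUSES = {404, 410}


def broken_link_report(links):
    """Map each broken target URL to the pages that link to it."""
    report = defaultdict(list)
    for source, target, status in links:
        if status in BROKEN_STATUSES:
            report[target].append(source)
    return dict(report)


# Illustrative data only.
audit = [
    ("https://example.com/", "https://example.com/shoes", 200),
    ("https://example.com/", "https://example.com/old-sale", 404),
    ("https://example.com/blog", "https://example.com/old-sale", 404),
]
report = broken_link_report(audit)
```

For the sample data, `report` lists `https://example.com/old-sale` with the two pages that still link to it, which is the set of pages to edit or redirect.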

3. Use XML Sitemaps

XML sitemaps help search engines discover important pages.

A good sitemap should:

- Include important URLs

- Exclude duplicate pages

- Be regularly updated

Example:

Large e-commerce websites often create multiple sitemaps:

sitemap-products.xml

sitemap-categories.xml

sitemap-blog.xml

This helps search engines crawl content efficiently.
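
Splitting is also a practical necessity, because the sitemap protocol limits each file to 50,000 URLs. The following is a minimal sketch of generating sitemap files with Python's standard library; the function names are hypothetical, and a real setup would also write the files to disk and list them in a sitemap index.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # limit set by the sitemap protocol


def build_sitemap(urls):
    """Return one sitemap document (as bytes) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


def split_into_sitemaps(urls, size=MAX_URLS_PER_FILE):
    """Split a long URL list into several sitemap documents."""
    return [build_sitemap(urls[i:i + size]) for i in range(0, len(urls), size)]


product_urls = [f"https://example.com/product/{n}" for n in range(5)]
documents = split_into_sitemaps(product_urls, size=2)  # small size for demonstration
```

With five URLs and a chunk size of two, `documents` holds three sitemap documents. Only canonical, indexable URLs should be passed in, in line with the checklist above.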

4. Use Robots.txt to Block Low-Value Pages

The robots.txt file can prevent crawlers from accessing unnecessary URLs.

Example:

User-agent: *

Disallow: /wp-admin/

Disallow: /search/

Disallow: /tag/

Blocking these pages saves crawl budget for important content.
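
Before deploying rules like these, it can help to check them against a few sample URLs. The sketch below uses Python's built-in `urllib.robotparser`; the `User-agent: *` line and the sample paths are assumptions for the example. Note that this parser does simple prefix matching and does not implement every extension that Googlebot supports, such as wildcards.

```python
from urllib.robotparser import RobotFileParser

RULES = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /search/
Disallow: /tag/
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())


def is_crawlable(path: str, agent: str = "Googlebot") -> bool:
    """Return True if the rules allow the agent to fetch the given path."""
    return parser.can_fetch(agent, "https://example.com" + path)


checks = {path: is_crawlable(path) for path in ["/shoes", "/tag/sale", "/search/?q=boots"]}
```

For these rules, `/shoes` is crawlable while `/tag/sale` and `/search/?q=boots` are blocked. Running the same check over your most important URLs is a quick guard against accidentally blocking them.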

5. Manage URL Parameters

If your website generates many parameter URLs, configure them carefully.

You can:

- Use canonical tags

- Avoid unnecessary parameters

- Block low-value parameter patterns in robots.txt (Google retired the URL Parameters tool in Search Console in 2022)

Example:

<link rel="canonical" href="https://example.com/shoes">

This tells search engines which URL version should be indexed.
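
To confirm that parameter URLs actually declare the expected canonical, you can extract the tag from the page HTML. This is a small sketch using Python's standard `html.parser`; the class and function names are hypothetical, and fetching the HTML is left out.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and self.canonical is None:
            self.canonical = attributes.get("href")


def find_canonical(html: str):
    """Return the canonical URL declared in the HTML, or None if absent."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


page = '<html><head><link rel="canonical" href="https://example.com/shoes"></head></html>'
canonical = find_canonical(page)
```

Here `canonical` is `https://example.com/shoes`. Comparing this value across all parameter variants of a page shows whether they point to the same preferred URL.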

6. Consolidate Duplicate Pages

Use canonical tags or redirects to consolidate duplicate pages.

Example:

Redirect:

example.com/page?ref=twitter

→ example.com/page

This prevents duplicate crawling.

7. Improve Internal Linking

Strong internal linking helps search engines discover important pages.

Example structure:

Homepage

→ Category Page

→ Subcategory

→ Product Page

This hierarchical structure improves crawl efficiency.
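
Click depth, the number of links a crawler must follow from the homepage to reach a page, is one way to measure this structure. The sketch below computes it with a breadth-first search over an internal link graph; the graph and paths are illustrative.

```python
from collections import deque


def click_depths(links, start):
    """Return the minimum number of clicks from start to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Illustrative link graph: page -> pages it links to.
site = {
    "/": ["/shoes"],
    "/shoes": ["/shoes/running"],
    "/shoes/running": ["/shoes/running/model-x"],
    "/orphan": ["/"],
}
depths = click_depths(site, "/")
```

In this example the product page `/shoes/running/model-x` sits at depth 3, and `/orphan` is missing from `depths` because no page links to it. Pages that are very deep or unreachable are the ones most likely to be crawled rarely.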

8. Update Content Regularly

Websites that update content frequently attract more crawling.

Examples of updates:

- New blog posts

- Updated statistics

- Fresh case studies

Search engines tend to recrawl pages more often when their content changes frequently.

Crawl Budget Optimization Example

Imagine a large online store with 200,000 product pages.

Problems:

- 50,000 filter URLs

- 20,000 duplicate URLs

- 10,000 broken pages

Googlebot wastes crawl resources on these pages.

After optimization:

- Filter pages blocked via robots.txt

- Duplicate pages canonicalized

- Broken links fixed

Result:

Googlebot now focuses on real product pages, improving indexing and search visibility.
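
The scale of the waste in this example can be estimated with simple arithmetic. The sketch assumes the problem URLs exist in addition to the 200,000 product pages and that every URL is equally likely to be crawled, which is a simplification.

```python
product_pages = 200_000
low_value = {"filter": 50_000, "duplicate": 20_000, "broken": 10_000}

wasted = sum(low_value.values())
total = product_pages + wasted
share = wasted / total

print(f"{wasted:,} of {total:,} URLs are low-value ({share:.1%})")
# prints: 80,000 of 280,000 URLs are low-value (28.6%)
```

Under these assumptions, more than a quarter of crawlable URLs contribute nothing to search visibility before the cleanup.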

Crawl Budget Myths

There are many misconceptions about the crawl budget.

Myth 1: Crawl Budget Affects Small Websites

Most small websites with fewer than 1,000 pages don’t need to worry about crawl budget.

Myth 2: More Pages Means More Traffic

Publishing thousands of low-quality pages can actually harm crawl efficiency.

Quality matters more than quantity.

Myth 3: Blocking Pages Always Saves Crawl Budget

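Blocking pages in robots.txt stops compliant crawlers from fetching them, but it does not remove them from the index: a blocked URL can still appear in search results if other pages link to it. Blocking also does not guarantee that the freed capacity is spent on your important pages.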

Best Practices Summary

To manage crawl budget effectively:

- Improve server performance

- Fix broken links

- Remove duplicate pages

- Use XML sitemaps

- Optimize internal linking

- Block low-value URLs

- Update content regularly

These steps ensure search engines crawl the pages that matter most.

Conclusion

Crawl budget is an essential concept in technical SEO, particularly for large websites with thousands or millions of pages. Search engines have limited resources, so they must decide which pages to crawl and how often.

If your crawl budget is wasted on duplicate URLs, broken pages, or low-value content, important pages may remain uncrawled or unindexed.

By improving site performance, fixing technical issues, optimizing internal linking, and guiding search engines with sitemaps and robots.txt, you can ensure your crawl budget is used effectively.

For large websites, proper crawl budget management can significantly improve indexing speed, search visibility, and overall SEO performance.